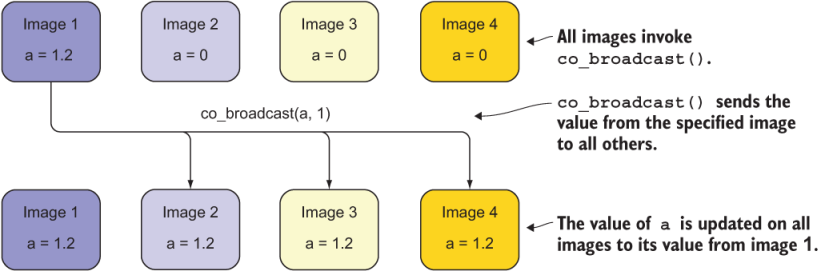

While all images must execute the call to co_broadcast, the specified image acts as the sender, and all others act as receivers. Figure 12.5 illustrates how this works.

Figure 12.5 A collective broadcast from image 1 to the other three images, with arrows indicating the possible data flow direction
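As a minimal sketch of the pattern in figure 12.5, the following program broadcasts an integer from image 1 to all other images. The program name, the variable `a`, and its starting values are made up for illustration. The sketch assumes a coarray-capable compiler setup (for example, gfortran with OpenCoarrays) and a run on several images.

```fortran
program broadcast_demo
  ! Illustrative sketch; names and values here are hypothetical.
  implicit none
  integer :: a

  ! Every image starts with a different value (10 on image 1, 20 on image 2, ...).
  a = this_image() * 10

  ! All images must execute this call; image 1 acts as the sender.
  call co_broadcast(a, source_image=1)

  ! After the call, every image holds the value that image 1 had.
  print *, 'Image', this_image(), 'of', num_images(), 'has a =', a

end program broadcast_demo
```

The order in which the images print is not guaranteed, but each one should report the value that image 1 started with.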

The inner workings of this procedure, including copying of data and synchronization of images, are implemented by the compiler and underlying libraries, so you and I don’t have to worry about them.

The full syntax for invoking co_broadcast is similar to co_min, co_max, and co_sum, except that the broadcast variable isn’t limited to numeric data types. A subtle but important point about collective subroutines is that the variables they operate on don’t have to be declared as coarrays. This allows you to write some parallel algorithms without declaring a single coarray. For an example, take a look at the source code of a popular Fortran framework for neural networks and deep learning at https://github.com/modern-fortran/neural-fortran. It implements parallel network training with co_broadcast and co_sum, without explicitly declaring any coarrays.
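To make these two points concrete, here is a small sketch that broadcasts a character string (a non-numeric type) and then sums a real array across images, with no coarray declared anywhere. It is not taken from neural-fortran; the names `label` and `partial` and their values are hypothetical. The optional `stat` and `errmsg` arguments are included only to show where error handling would go.

```fortran
program collectives_without_coarrays
  ! Illustrative sketch; none of these variables are coarrays.
  implicit none
  character(len=16) :: label     ! non-numeric data to broadcast
  character(len=100) :: errmsg
  real :: partial(3)             ! per-image contribution to a sum
  integer :: stat

  ! Only image 1 knows the label before the broadcast.
  label = ''
  if (this_image() == 1) label = 'experiment-a'

  ! Every image makes the call; image 1 is the sender.
  call co_broadcast(label, source_image=1, stat=stat, errmsg=errmsg)
  if (stat /= 0) then
    print *, 'co_broadcast failed: ', trim(errmsg)
    error stop 1
  end if

  ! Each image contributes its own image number to every element.
  partial = real(this_image())

  ! Without result_image, every image receives the element-wise sum.
  call co_sum(partial)

  if (this_image() == 1) print *, trim(label), ': sum =', partial

end program collectives_without_coarrays
```

Note that `label` has the same length on every image, which co_broadcast requires of character arguments.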

Congratulations, you made it to the end! Having now covered teams, events, and collectives, it’s a wrap. Work through the exercises, make a few parallel toy apps of your own, and you’re off to the races. You should have enough Fortran experience under your belt to start new Fortran programs and libraries, as well as to contribute to other open source projects out there. If you’d like to return to our main example, appendix C provides a recap and complete code of the tsunami simulator. It also offers ideas on where to go from here, as well as tips for learning more about Fortran. The amazing world of parallel Fortran programming is waiting for you.