The way the processor industry is going, is to add more and more cores, but nobody knows how to program those things. I mean, two, yeah; four, not really; eight, forget it.

You may be asking, “Why write parallel programs?” It can seem like an unnecessary complication. In general, you’ll want to write parallel programs for two reasons:

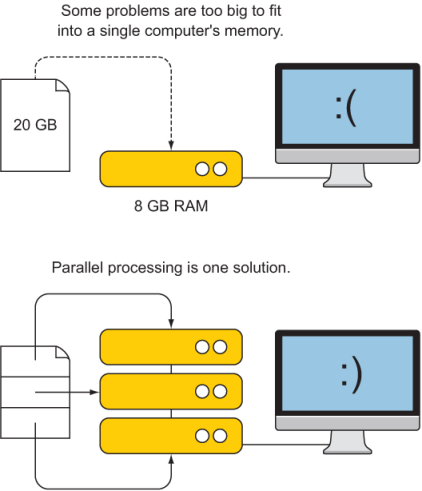

- Too big to fit into memory – Some programs are too big to fit into a single computer’s memory. For example, if you want to calculate statistics such as average and variance on a data file that’s 20 GB, but your computer’s RAM can hold only 8 GB, you may be stuck. The parallel programming solution to this problem is to load only a fraction of the file on each computer, compute the statistics for that fraction, and share only the intermediate data required to compute the final results.

- Too slow to be useful – Even if your program fits into memory, it may be too computationally expensive to run on a single CPU. Consider weather prediction: if the forecast for the next day takes 12 hours to compute, it has already lost much of its value by the time the program finishes. Today’s operational forecast centers deliver forecasts for a week ahead with only a few hours of compute time by distributing the computational workload across hundreds of CPUs.
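The statistics example in the first bullet can be sketched in a few lines. This is a minimal, hypothetical Python illustration and not code from the tsunami simulator: the list slices stand in for the portions of the file that separate computers would hold, and the names `partial_stats` and `combine_stats` are made up for this example. Each worker reduces its chunk to three numbers (count, mean, and sum of squared deviations), and only those summaries need to be shared.

```python
from statistics import fmean

def partial_stats(chunk):
    """Summarize one chunk as (count, mean, sum of squared deviations)."""
    n = len(chunk)
    mean = fmean(chunk)
    m2 = sum((x - mean) ** 2 for x in chunk)
    return n, mean, m2

def combine_stats(a, b):
    """Merge two summaries without revisiting the underlying data."""
    n_a, mean_a, m2_a = a
    n_b, mean_b, m2_b = b
    n = n_a + n_b
    delta = mean_b - mean_a
    mean = mean_a + delta * n_b / n
    m2 = m2_a + m2_b + delta ** 2 * n_a * n_b / n
    return n, mean, m2

# Small stand-in for a data set too large for one machine (assumption).
data = [float(x) for x in range(1, 101)]
chunks = [data[i:i + 25] for i in range(0, len(data), 25)]

# In a real parallel program, each worker would compute one of these.
partials = [partial_stats(chunk) for chunk in chunks]

# Only the three-number summaries are exchanged and merged.
total = partials[0]
for summary in partials[1:]:
    total = combine_stats(total, summary)

count, mean, m2 = total
variance = m2 / count  # population variance
```

The merged result matches what a single pass over all the data would give, yet no worker ever needs more than its own chunk in memory.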

Figure 7.1 illustrates these challenges.

Figure 7.1 When a problem is too big to fit into a single computer’s memory, one solution is to split the input data and process it in parallel. Because each computer will work on only a fraction of the calculation, this program will also finish in a fraction of the time.

You’ll find that most problems that take a very long time to compute are also too big to fit into the memory of a single computer. Computational fluid dynamics problems like the tsunami simulator fall into both of these categories. So far, we’ve been solving the shallow water equations over only 100 grid cells. But as we go to 1,000 or 10,000 grid cells for more realistic simulations, we’ll inevitably increase the memory and computational footprint. We’ll soon tackle problem sizes for which parallel programming will significantly reduce compute time.
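To make the idea of splitting a larger grid concrete, here is a small Python sketch of one possible way to assign contiguous ranges of grid cells to workers. The function name `tile_bounds` and the choice of three workers are assumptions for illustration only; this is not necessarily how the simulator will divide its domain.

```python
def tile_bounds(num_cells, num_workers):
    """Return one (start, end) pair per worker, with end exclusive."""
    base, extra = divmod(num_cells, num_workers)
    bounds = []
    start = 0
    for worker in range(num_workers):
        # The first `extra` workers take one additional cell each.
        size = base + (1 if worker < extra else 0)
        bounds.append((start, start + size))
        start += size
    return bounds

# Hypothetical setup: 10,000 grid cells shared among 3 workers.
bounds = tile_bounds(10_000, 3)
for worker, (start, end) in enumerate(bounds):
    print(f"worker {worker}: cells {start}..{end - 1} ({end - start} cells)")
```

Each worker then stores and updates only its own range of cells, which is what reduces both the memory needed per computer and the work done by each one.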